Behavioral Analytics in Action: A Simulated UX A/B Test

Project type

A/B Testing & Behavioral Marketing Analytics Project

Date

Nov 2025

Location

Fairborn, Ohio

Introduction

Can Small Website Changes Make a Big Difference?

This simulated research project focuses on a fundamental challenge in digital marketing: improving the performance of a landing page. A landing page acts as a decision point, where users either convert (by submitting a form or clicking a CTA) or leave. Minor design flaws like a slow-loading page, poorly placed CTA, or a long form can increase bounce rates and reduce conversions.

A/B testing is a well-known experimental technique in marketing analytics. It lets us compare two versions of a webpage to see which one leads to more conversions. This project compares an older design (Version A) and a new optimized layout (Version B) using behavioral assumptions and simulated web traffic.

The experiment bridges UX design, behavioral economics, and statistics to show how marketers use testing and analysis to drive business value.

Objective

What Was the Goal of This Simulated A/B Test?

The purpose of this simulated study was to evaluate whether a new version of a landing page could increase conversions compared to the original. The focus was not only on whether users submitted the form but also on understanding which behavioral elements (like form length or CTA placement) influenced that decision. This reflects how marketers align business goals with user experience and measure success using analytics and data-driven methods.

Hypothesis

We formed the following hypotheses to test:

Null Hypothesis (H₀): There is no difference in the conversion rate between Version A and Version B.

Alternative Hypothesis (H₁): Version B has a higher conversion rate than Version A due to improvements in CTA visibility, form length, and page speed.

This helped set a clear test structure, following a scientific method used in marketing analytics: assume no change, then try to disprove it with data.

Simulated Data and Behavioral Model

We created simulated traffic that mimics GA4-style behavior and user interactions. The dataset was constructed to reflect real-world patterns based on conversion optimization research.

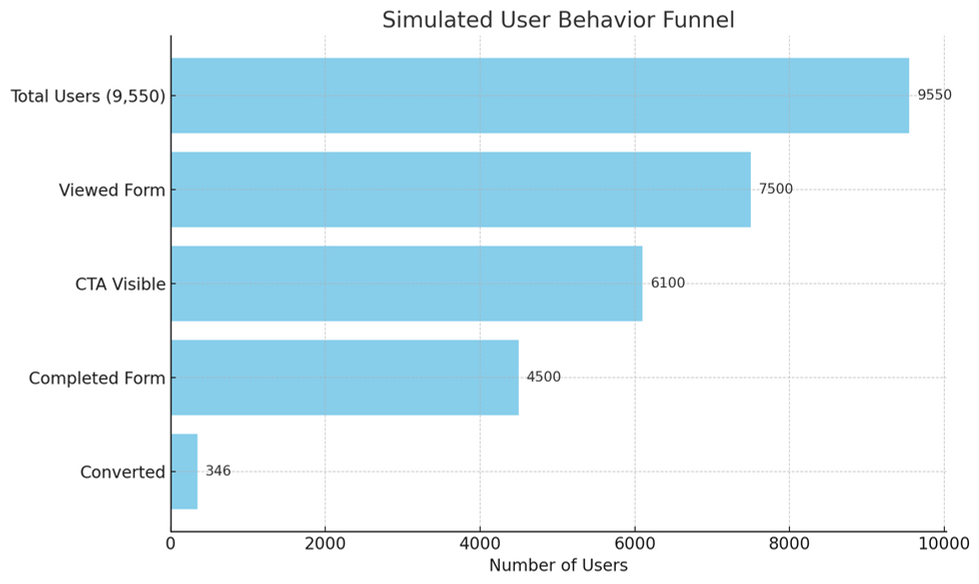

Total users in the experiment: 9,550

- Version A: 4,800 users

- Version B: 4,750 users

Simulated traffic sources:

- 55% Organic search

- 30% Direct visits

- 15% Social or referral traffic

Behavioral assumptions applied in simulation:

- 70% of users abandon forms with more than 6 input fields

- Every 1-second increase in page load time reduces conversion rate by ~2%

- CTAs placed below the fold reduce user engagement roughly threefold

These assumptions let the simulation approximate the behavior of real users, so the insights and statistical conclusions resemble what a live A/B test would produce.
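As a rough illustration of how traffic like this could be generated, the sketch below draws one simulated user at a time. It is not the project's actual simulation code, which is not shown here. The per-version conversion probabilities are an assumption: they are set to the observed rates reported in the results (146/4,800 and 200/4,750) rather than derived from the behavioral assumptions above. The names `simulate_users`, `CONVERSION_RATE`, and `SOURCE_WEIGHTS` are hypothetical.

```python
import random

# ASSUMPTION: conversion probabilities are set to the observed rates from
# the results table; the original simulation's parameters are not documented.
CONVERSION_RATE = {"A": 146 / 4800, "B": 200 / 4750}
USERS = {"A": 4800, "B": 4750}

# Traffic source mix stated in the write-up.
SOURCES = ["organic", "direct", "social_referral"]
SOURCE_WEIGHTS = [0.55, 0.30, 0.15]


def simulate_users(version, rng):
    """Return one dict per simulated user for the given page version."""
    rows = []
    for _ in range(USERS[version]):
        source = rng.choices(SOURCES, weights=SOURCE_WEIGHTS, k=1)[0]
        converted = rng.random() < CONVERSION_RATE[version]
        rows.append({"version": version, "source": source, "converted": converted})
    return rows


rng = random.Random(42)  # fixed seed so the run is repeatable
traffic = simulate_users("A", rng) + simulate_users("B", rng)

# Summarize users, conversions, and conversion rate per version.
for version in ("A", "B"):
    group = [u for u in traffic if u["version"] == version]
    conversions = sum(u["converted"] for u in group)
    print(version, len(group), conversions, f"{conversions / len(group):.2%}")
```

Because each conversion is a random draw, the simulated counts will vary around the target rates from seed to seed, which is the same sampling noise a live test would show.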

Experimental Design and Methodology

We ran a randomized A/B test that split simulated users roughly evenly (4,800 and 4,750) between two page designs. Each version tracked specific metrics like form completion, CTA visibility, and bounce rates. We used these indicators to measure both surface-level outcomes (conversion rate) and deeper user behavior. This helped isolate which UX improvements were responsible for performance gains, a key strategy in data-driven marketing.
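One simple way to randomize assignment is an independent coin flip per user, sketched below. This is an assumption for illustration; the write-up does not say which mechanism was used, and `assign_variant` is a hypothetical helper. A per-user coin flip produces groups that are close to equal in size but rarely identical, which fits a split such as 4,800 versus 4,750.

```python
import random
from collections import Counter


def assign_variant(rng):
    """Assign one user to 'A' or 'B' with equal probability."""
    return "A" if rng.random() < 0.5 else "B"


rng = random.Random(7)  # fixed seed for a repeatable illustration
assignments = [assign_variant(rng) for _ in range(9550)]
counts = Counter(assignments)

# Group sizes land near 4,775 each, but an exact tie is unlikely.
print(counts["A"], counts["B"])
```

Randomizing at the user level is what allows differences in outcomes to be attributed to the page design rather than to who happened to see each version.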

Key Metrics Comparison

Below is a table comparing the performance metrics between Version A and Version B:

| Metric | Version A | Version B | Change |
| --- | --- | --- | --- |
| Scroll Depth | 38% | 62% | +24 pp |
| CTA Visibility | 29% | 87% | +58 pp |
| Form Abandonment | 71% | 39% | -32 pp |
| Page Load Time | 3.8 seconds | 1.9 seconds | -1.9 seconds |
| Conversion Rate | 3.04% (146/4,800) | 4.21% (200/4,750) | +1.17 pp |

Note: The bar chart visual comparison is available in the downloadable version.
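The Change column can be reproduced from the raw values, as in the short sketch below. It also separates the absolute change in percentage points from the relative lift, since the two are easy to confuse. The variable names are illustrative, not taken from the project.

```python
# Metric values from the comparison table (percentages, except load time).
version_a = {"scroll_depth": 38.0, "cta_visibility": 29.0, "form_abandonment": 71.0}
version_b = {"scroll_depth": 62.0, "cta_visibility": 87.0, "form_abandonment": 39.0}

# Absolute change in percentage points for each behavioral metric.
pp_changes = {name: version_b[name] - version_a[name] for name in version_a}
for name, change in pp_changes.items():
    print(f"{name}: {change:+.0f} pp")

# Page load time change in seconds.
load_time_change = round(1.9 - 3.8, 1)
print(f"page_load_time: {load_time_change:+.1f} s")

# Conversion rate: absolute change (pp) versus relative lift (%).
rate_a = 146 / 4800
rate_b = 200 / 4750
pp_change = (rate_b - rate_a) * 100
relative_lift = (rate_b - rate_a) / rate_a
print(f"conversion: {pp_change:+.2f} pp, relative lift {relative_lift:.1%}")
```

A change of +1.17 percentage points on a base of about 3% is a relative lift of roughly 38%, which is why both figures appear in this write-up.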

Behavioral Marketing

This simulated experiment was rooted in behavioral marketing, which uses psychology to understand how people make decisions. We applied theories like cognitive load (too many form fields overwhelm users), visibility bias (users focus on what's seen first), and friction theory (slower pages create barriers). Each design change was grounded in research, and the resulting uplift is consistent with those psychological models.

| Principle | Application in the Simulated Test | Why It Helped |
| --- | --- | --- |
| Visibility Bias | Moved the CTA to the top of the page | Users saw and clicked it faster |
| Cognitive Load | Shortened the form to 4 fields | Less effort = higher completion |
| Framing Effect | Used clearer messaging above the form | Improved understanding and motivation |
| Trust Signals | Added security badges or reassurance | Increased form confidence |
| Friction Theory | Halved the page load time | Faster access = lower bounce rates |

Statistical Analysis and Mathematical Reasoning

To validate the simulated results, we performed a two-proportion z-test, a standard tool in A/B testing. This test compares two sample proportions (conversion rates) and checks whether the difference is statistically significant or just random noise. The z-score (3.06) and low one-tailed p-value (about 0.0011) indicate that Version B's higher conversion rate in the simulation is unlikely to be due to chance.

Step 1: Define sample sizes and successes

• n₁ = 4800, x₁ = 146 → p₁ = x₁/n₁ ≈ 0.0304

• n₂ = 4750, x₂ = 200 → p₂ = x₂/n₂ ≈ 0.0421

Step 2: Calculate pooled proportion

p̂ = (x₁ + x₂) / (n₁ + n₂) = 346 / 9550 ≈ 0.0362

Step 3: Compute standard error (SE)

SE = sqrt[p̂ (1 – p̂) (1/n₁ + 1/n₂)] = sqrt[0.0362 × 0.9638 × 0.000419] ≈ 0.00382

Step 4: Compute z-score

z = (p₂ – p₁) / SE = (0.0421 – 0.0304) / 0.00382 ≈ 3.06

Step 5: Interpret results

A z-score of 3.06 corresponds to a one-tailed p-value of about 0.0011. Since p < 0.05, we reject the null hypothesis:

Version B's conversion rate is significantly higher.

Confidence Intervals (95% CI):

• Version A: [2.6%, 3.5%]

• Version B: [3.6%, 4.8%]

These results show strong statistical evidence that Version B performs better.
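The steps above can be checked with a few lines of standard-library Python. The sketch below is one way to do it, not the project's original analysis code. It assumes a pooled standard error for the test and a normal-approximation (Wald) interval for each version's conversion rate. The function names `two_proportion_z_test` and `wald_interval` are hypothetical.

```python
import math


def two_proportion_z_test(x1, n1, x2, n2):
    """One-tailed two-proportion z-test for H1: p2 > p1, using a pooled SE."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p2 - p1) / se
    # Upper-tail probability of the standard normal distribution at z.
    p_one_tailed = 0.5 * math.erfc(z / math.sqrt(2))
    return z, se, p_one_tailed


def wald_interval(x, n, z_crit=1.96):
    """Normal-approximation 95% confidence interval for a single proportion."""
    p = x / n
    half_width = z_crit * math.sqrt(p * (1 - p) / n)
    return p - half_width, p + half_width


z, se, p_value = two_proportion_z_test(146, 4800, 200, 4750)
ci_a = wald_interval(146, 4800)
ci_b = wald_interval(200, 4750)

print(f"SE = {se:.5f}, z = {z:.2f}, one-tailed p = {p_value:.4f}")
print(f"Version A 95% CI: [{ci_a[0]:.1%}, {ci_a[1]:.1%}]")
print(f"Version B 95% CI: [{ci_b[0]:.1%}, {ci_b[1]:.1%}]")
```

Computing the values directly from the counts avoids the rounding drift that creeps in when each step is carried forward by hand.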

Conclusion

This simulated A/B test illustrates how data-informed UX changes can significantly improve conversion. The changes weren't random; they were tied to behavioral theories and tested with proper statistical methods. With a relative lift of over 38% and strong statistical significance, the simulation supports the case that behavioral design leads to better business results. This case shows how marketers can combine user research, simulation, and analytics to drive measurable ROI.

The results also reinforce the role of behavioral psychology in user experience design. Each design enhancement directly addressed a cognitive or friction-based barrier. Moving the CTA improved attention flow, shortening the form reduced cognitive load, and speeding up page performance addressed bounce triggers. These improvements, though subtle in isolation, worked together to create a compounding effect on user behavior.

Ultimately, this simulated project demonstrates that conversion optimization is not about guesswork; it is about strategy, user-centric design, and evidence-based decisions. The experiment models the mindset of today's analytical marketer: test early, measure rigorously, and continuously iterate based on real insights.